The world of cinema has been revolutionized by computer-generated imagery (CGI), a technological marvel that transports audiences to fantastical realms and brings impossible creatures to life. From pioneering efforts in films like *Vertigo* and *Westworld* to the immersive worlds of *Toy Story*, CGI has continuously pushed visual storytelling boundaries, becoming an indispensable tool for filmmakers across all genres. It allows for creating images unfeasible through other technologies, granting artists the power to craft content without extensive physical sets or numerous actors.

However, for all its transformative capabilities, CGI is far from a foolproof solution. The journey of computer graphics in film features incredible successes, but also significant challenges and, at times, glaring missteps that can pull audiences right out of the cinematic experience. These aren’t always outright failures, but rather inherent technical hurdles and limitations that, when mishandled, lead to moments that are, quite frankly, embarrassing for a production. As fans, we often discuss visual effects triumphs, yet it’s equally important to critically analyze where the illusion falters.

Drawing directly from CGI’s definition and characteristics, we’re diving deep into these persistent challenges. This analysis will explore core technical complexities that can transform cutting-edge visual effects into something artificial, distracting, or even laughable. From the subtle nuances of human anatomy to the physics of cloth, we’ll peel back the layers to understand why certain aspects of computer-generated imagery remain incredibly difficult to perfect, and how these difficulties can lead to cinematic “disasters” in the making.

1. **The Peril of Artificial Figures and Environments**

One of the most immediate and immersion-breaking issues in CGI is the creation of “fake figures, renderings, objects, and environments that look artificial and/or stand out against everything else.” This isn’t merely poor lighting; it’s a fundamental failure for digital assets to convincingly mimic reality. Whether a painted-on background or a photo-realistic object lacking tactile qualities, such artificiality instantly shatters disbelief, pulling the audience from the narrative. These visual anomalies remind viewers they are watching a constructed image.

This problem often arises from the inherent complexity of real-world objects. Subtle imperfections, varied surface reactions to light, and minute textural variations are incredibly difficult to replicate perfectly. When these intricate details are overlooked, the resulting CGI appears flat, plasticky, or overtly digital. The visual effect doesn’t just look “off”; it actively distracts, causing viewers to focus on the flawed rendering rather than the unfolding story.

The challenge intensifies when artificial elements interact with live-action footage. Blending real and artificial elements demands meticulous attention to lighting, shadow, perspective, and motion. If a CGI monster is superimposed onto a city scene without accurate environmental interaction, the illusion collapses. In such cases the digital enhancements detract from the scene rather than bolster it, making the entire sequence feel cheap and unconvincing.

CGI’s ultimate goal is seamless enhancement, achieving invisibility. When figures or environments appear overtly artificial, the technology ironically highlights its presence for the wrong reasons. This reflects a clash between the artistic pursuit of realism and production limitations. Shortcuts in achieving hyper-realism inevitably produce visuals that “stand out against everything else,” not for spectacle, but for glaring artificiality, creating an embarrassing moment for filmmakers.

2. **Navigating the Uncanny Valley**

Among the most discussed and psychologically unsettling challenges in computer-generated imagery is the “uncanny valley effect.” This phenomenon refers to the human ability to recognize things that look eerily like humans but are slightly off, evoking revulsion or unease. It’s a specific type of artificiality, preying on our innate sensitivity to human features and expressions. When CGI characters approach photorealism but fall short, they become actively disturbing.

The uncanny valley is particularly problematic for filmmakers creating realistic digital human characters or altering real actors. As the source material puts it, “Unrealistic, or badly managed computer-generated imagery can result in the uncanny valley effect.” This isn’t merely a visual glitch; it’s a deep-seated psychological reaction. Slight discrepancies in anatomy or expression trigger an alarm, signaling something fundamentally “wrong” with the human-like entity on screen.

Achieving true human realism in CGI is an incredibly complex endeavor, given the intricate details of the human body and face. While skeletal animation models are often used, they are “not always anatomically correct,” contributing to this phenomenon. Imperfect anatomical representation and difficulties replicating minute muscle interactions create a breeding ground for this unsettling effect, making digital characters appear rigid or lifeless.

This challenge has propelled advancements like motion capture, where artists use real human footage “to get footage of a human performing an action and then replicate it perfectly with computer-generated imagery so that it looks normal.” This technique aims to infuse digital models with authentic movement. Yet, even with motion capture, if the underlying digital model or final rendering isn’t meticulously integrated, the uncanny valley can still emerge, highlighting the delicate balance required to avoid embarrassing moments.

3. **The Intricacy of Realistic Cloth Simulation**

One often-overlooked source of “CGI disasters” is the difficulty of realistic cloth simulation. The source material points out: “To date, making the clothing of a digital character automatically fold in a natural way remains a challenge for many animators.” This seemingly simple aspect of realism is incredibly complex, involving “The geometric-mechanical structure at yarn crossing,” “The mechanics of continuous elastic sheets,” and “The geometric macroscopic features of cloth.” When these elements are not accurately simulated, digital garments look stiff, weightless, or unnatural.

The problem lies in fabric’s inherent physics. Real-world cloth drapes, wrinkles, stretches, and flows in response to gravity, movement, and surface interaction. Digitally replicating this requires advanced simulation accounting for countless variables—material properties, thickness, tension, and collision detection with the character model. Simplistic rendering often results in garments that clip through limbs, float unnaturally, or move without expected inertia, immediately betraying their digital origin.
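The drape-under-gravity behavior described above can be illustrated with a deliberately small sketch. It is not a production cloth solver, and the source does not say which method any studio uses. As a simple stand-in, it models one strand of point masses (think of a single thread of a cape) pinned at one end, moved with Verlet integration and held together by distance constraints. All names and constants (`simulate_strand`, `REST_LENGTH`, the damping factor) are hypothetical and chosen only for illustration.

```python
import math

GRAVITY = -9.81      # m/s^2 along the y axis
REST_LENGTH = 0.1    # assumed spacing between neighbouring points, in metres
DAMPING = 0.99       # assumed per-step velocity damping (crude air drag)


def simulate_strand(num_points=6, steps=600, dt=1.0 / 60.0, iterations=50):
    """Drop a horizontal strand pinned at its first point; return final positions."""
    # Start as a horizontal line so gravity has visible work to do.
    pos = [(i * REST_LENGTH, 0.0) for i in range(num_points)]
    prev = list(pos)  # previous positions; equal to pos means zero initial velocity

    for _ in range(steps):
        # Verlet step: velocity is implied by the difference between pos and prev.
        new_pos = []
        for (x, y), (px, py) in zip(pos, prev):
            vx, vy = (x - px) * DAMPING, (y - py) * DAMPING
            new_pos.append((x + vx, y + vy + GRAVITY * dt * dt))
        prev, pos = pos, new_pos
        pos[0] = (0.0, 0.0)  # the first point stays pinned

        # Repeatedly nudge neighbours back toward their rest distance.
        for _ in range(iterations):
            for i in range(num_points - 1):
                (x1, y1), (x2, y2) = pos[i], pos[i + 1]
                dx, dy = x2 - x1, y2 - y1
                dist = math.hypot(dx, dy)
                if dist == 0.0:
                    continue
                corr = (dist - REST_LENGTH) / dist
                if i == 0:
                    # The pinned point cannot move, so its neighbour takes the full correction.
                    pos[1] = (x2 - dx * corr, y2 - dy * corr)
                else:
                    pos[i] = (x1 + dx * corr * 0.5, y1 + dy * corr * 0.5)
                    pos[i + 1] = (x2 - dx * corr * 0.5, y2 - dy * corr * 0.5)
    return pos
```

Even this toy version needs dozens of constraint passes per frame for a handful of points. A real garment adds a two-dimensional mesh, bending and shear resistance, and collisions with the character, which is where clipping and floating artifacts tend to appear.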

For animators, this means clothing requires painstaking adjustment or simulation, even after character movement is perfected. The phrase “automatically fold in a natural way” highlights this difficulty. It’s about making cloth react believably to every twitch or gust of wind. If a character leaps, their cape should billow; if they sit, their trousers should crease. When these details are inadequate, clothing becomes a visual anomaly, creating a jarring, constructed reality.

This challenge is particularly noticeable in animated features or films with heavy CGI characters. If a digital superhero’s cape behaves like rigid plastic, or a digital princess’s gown lacks elegant flow, the character’s believability suffers. These subtle inaccuracies might not be overtly “disastrous,” but they accumulate, chipping away at immersion and making the digital presentation feel less authentic. This represents a hidden battleground for CGI artists, where failure leads to embarrassing visual footnotes.

4. **The Quest for Anatomical Accuracy in Digital Characters**

While many CGI models, particularly in skeletal animation, are designed for visual effect rather than scientific precision, the source material makes a crucial distinction: “Computer generated models used in skeletal animation are not always anatomically correct.” This lack of anatomical fidelity can be a significant source of “embarrassing moments” in film, especially for realistic digital characters or creatures. If the fundamental structure (bones, muscles, and their interactions) isn’t plausible, surface-level realism will always feel artificial.

Organizations like the Scientific Computing and Imaging Institute have developed “anatomically correct computer-based models,” underscoring this complex scientific need. When digital models diverge significantly from biological reality, characters’ movements can appear stiff, unnatural, or simply “wrong.” A limb might bend impossibly, or posture lack natural weight, creating subtle anatomical inaccuracies that prevent full immersion. The human eye is incredibly adept at recognizing such discrepancies.

The impact extends beyond fantasy creatures to digital doubles or background characters. If a digital crowd features figures with distorted anatomy or robotic movements due to incorrect skeletal structures, the illusion of a living, breathing world dissipates. This problem becomes acute when CGI characters interact closely with live-action actors, making the digital model stand out as an unconvincing imitation.

Furthermore, the source material explains that “the lack of anatomically correct digital models contributes to the necessity of motion capture as it is used with computer-generated imagery.” This highlights that even with captured human movement, if the underlying digital armature isn’t anatomically sound, the captured data might not translate perfectly. It’s a foundational issue: if the skeleton beneath the skin isn’t right, sophisticated texturing or animation cannot fully salvage the sense of a genuine, living entity, leading to potential cinematic “disasters” for realism.

5. **Capturing the Nuances of Human Expression and Fine Motor Skills**

Perhaps one of the most persistent and publicly scrutinized challenges in CGI, directly contributing to “embarrassing moments,” is the struggle to authentically capture “the infinitesimally small interactions between interlocking muscle groups used in fine motor skills like speaking.” The human face, in particular, is an incredibly expressive canvas, conveying a vast spectrum of emotions through subtle muscle movements, lip shapes, and tongue positions. Replicating this complexity digitally, especially “by hand,” is an almost insurmountable task, and its failure is glaringly evident.

The source material explicitly details this difficulty: “The constant motion of the face as it makes sounds with shaped lips and tongue movement, along with the facial expressions that go along with speaking are difficult to replicate by hand.” This explains why many digital human characters often appear stiff, vacant, or unsettling when they speak. The disconnect between a character’s voice and the visual representation of their mouth and face movements is a classic example of CGI going wrong, breaking the illusion entirely.

This critical gap in CGI realism directly feeds into the uncanny valley effect. The human brain is exceptionally attuned to facial expressions and their authenticity. A slightly off-kilter smile, a rigid eyebrow furrow, or an unnatural eye dart can immediately make a digital character feel artificial. Even when aiming for “photo realism” and “physical realism,” the “function realism in resembling its response to actions” is incredibly hard to achieve for intricate facial interactions during speech and emotional display.

The necessity for “motion capture” is reinforced here, as it “can catch the underlying movement of facial muscles and better replicate the visual that goes along with the audio.” This advanced technique injects real human performance data into digital models, providing the organic, nuanced movements so challenging to create from scratch. Yet, motion capture still requires skilled artists to refine the data, ensuring seamless integration without appearing rigid or artificial; any misstep can lead to a flat, unconvincing digital performance.

The first half of this deep dive covered the foundational challenges that explain why digital creations sometimes fall short of convincing realism. Beyond artificial figures and the uncanny valley, several other technical frontiers continue to test even the most skilled visual effects artists, often resulting in on-screen moments that are unforgettable for all the wrong reasons. The next five points explore further areas where the digital illusion can crumble, from digital transformations to the very fabric of simulated environments, and underscore that even with advanced technology, perfection remains an elusive, ever-evolving target.

6. **The Delicate Art of Digital De-aging**

Digital de-aging has emerged as a fascinating yet profoundly difficult frontier in computer-generated imagery, allowing filmmakers to turn back the clock on actors for flashback scenes or cameos. This highly specialized visual effect involves altering an actor’s appearance to make them look younger, a process that typically relies on a sophisticated blend of facial scanning technologies, motion capture, and meticulous photo references from their youth. While conceptually straightforward, the execution is anything but, as the slightest imperfection can shatter the illusion and plunge audiences into a jarring sense of artificiality. The goal is to make the actor look genuinely youthful, not merely smoothed over or airbrushed.

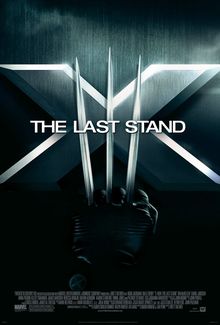

One of the earliest public forays into digital de-aging occurred in *X-Men: The Last Stand* (2006), a 20th Century Fox film based on Marvel characters. The film famously utilized this technology on veteran actors Patrick Stewart and Ian McKellen, portraying their iconic characters at a younger age for crucial flashback sequences. The visual effects company Lola VFX, tasked with this pioneering effort, painstakingly smoothed out wrinkles on their faces, using extensive photo references from the actors’ younger days. While groundbreaking for its time, these early attempts often had a visibly artificial quality, a testament to the infancy of the technology and the immense difficulty in seamlessly grafting youth onto an older performance without losing essential character.

Over the years, de-aging technologies have seen remarkable advancements, driven by the continuous pursuit of greater realism. Recent films, such as *Here* (2024), showcase how the field is evolving, portraying actors at significantly younger ages through the use of sophisticated digital AI techniques. This involves scanning millions of facial features and meticulously incorporating a number of them onto actors’ faces to subtly alter their appearance, aiming for a far more convincing transformation than was possible previously. Despite these leaps, the challenge remains to achieve a de-aged face that not only looks anatomically correct but also retains the nuanced emotional performance of the actor, a delicate balance that continues to define this artistic and technical tightrope walk.

7. **The Inherent Limitations of Early CGI Systems**

To truly appreciate the “CGI disasters” of today, it’s crucial to understand the foundational struggles of early computer-generated imagery. In its nascent stages, CGI was a far cry from the photorealistic marvels we now expect, often limited to depicting objects composed solely of “planar polygons.” This geometric simplicity meant that early digital creations, while revolutionary for their time, inherently lacked the intricate curves, smooth surfaces, and organic complexity needed to convincingly mimic reality. What was once cutting-edge technology, pushing the boundaries of what was possible on screen, now serves as a stark reminder of the rapid evolution of digital graphics and the inherent limitations of those pioneering systems.

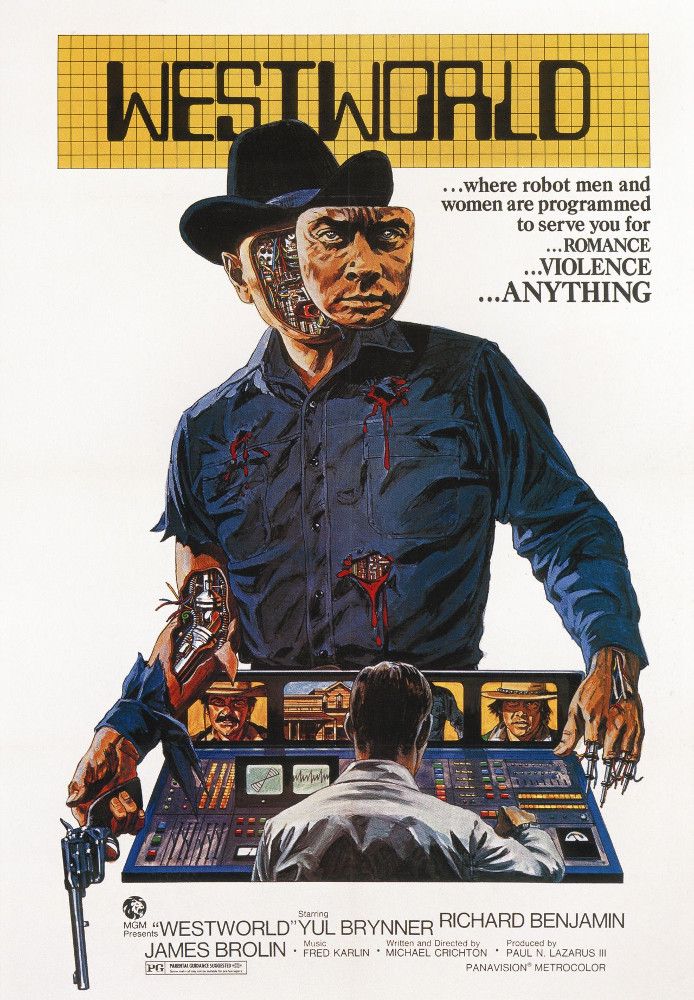

Hollywood’s initial forays into CGI, dating back to the 1970s, showcased these limitations even in landmark films. *Westworld* (1973) offered audiences rudimentary “digital viewpoints,” while *Star Wars* (1977) and *Alien* (1979) incorporated basic “wire-frame models” – visual effects that, by today’s standards, appear jarringly artificial and, yes, even embarrassing. The 1980s saw further progression with films like *Tron* (1982) and *The Last Starfighter* (1984) attempting to create “full models of real-life objects and life-like characters,” yet these were still constrained by the primitive rendering capabilities and computational power available. The visible artificiality wasn’t always a failure of artistic intent, but a direct consequence of technology that simply wasn’t ready to deliver seamless illusions.

Interestingly, the development of CGI in flight simulators often outpaced its application in commercial film, with systems like the Link Digital Image Generator (DIG) by Singer Company pioneering significant advancements. This “real-time, 3D capable, day/dusk/night system” was designed to provide a visual experience that “realistically corresponded with the view of the pilot,” showcasing features like “realistic texture, shading, translucency capabilities, and free of aliasing” years before they were commonplace in cinema. While these advancements eventually trickled into filmmaking, the inherent technological gap meant that early cinematic CGI, even with the best intentions, often looked crude and unconvincing, laying the groundwork for many of the visual “disasters” that audiences now scrutinize with a critical eye.

8. **The Persistent Challenges of Achieving Realistic Textures and Shading**

Beyond the mere shape of a digital object, its surface appearance – how it reacts to light, its perceived texture, and its material properties – is paramount to achieving visual realism. This is where the persistent challenge of realistic textures and shading comes into play, a hurdle that, when not properly overcome, can instantly betray a digital creation as artificial. For instance, rendering human skin convincingly presents a tri-layered quest for realism: achieving “Photo realism in resembling real skin at the static level,” ensuring “Physical realism in resembling its movements,” and demonstrating “Function realism in resembling its response to actions.” Any disconnect across these levels leads to a surface that just looks ‘wrong’.

The microscopic complexity of real-world surfaces, especially organic ones like human skin, poses an enormous technical burden. The source material highlights that “the finest visible features such as fine wrinkles and skin pores are the size of about 100 μm or 0.1 millimetres,” an incredibly minute detail that must be accurately replicated. To model this level of intricacy, digital artists often employ highly advanced techniques, such as representing skin as a “7-dimensional bidirectional texture function (BTF) or a collection of bidirectional scattering distribution function (BSDF) over the target’s surfaces.” This technical jargon underscores the depth of scientific understanding and computational power required to make a digital surface truly believable.
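To give the 100 μm figure some scale, here is a rough back-of-the-envelope sketch of how many texture samples a face would need to resolve features that small. The face width and the two-samples-per-feature rule are assumptions made purely for illustration, and `texels_across` is a hypothetical helper, not part of any rendering tool.

```python
import math

FEATURE_SIZE_UM = 100      # finest visible skin features, per the text above (100 μm)
SAMPLES_PER_FEATURE = 2    # assumption: at least two texels across each feature
FACE_WIDTH_UM = 200_000    # assumption: a face roughly 20 cm wide


def texels_across(width_um, feature_um=FEATURE_SIZE_UM, samples=SAMPLES_PER_FEATURE):
    """Texels needed along one axis to resolve features of the given size."""
    return math.ceil(width_um * samples / feature_um)


across = texels_across(FACE_WIDTH_UM)   # texels along one axis
square_map = across * across            # texels in a square texture of that width
```

Under these assumptions the face needs 4,000 texels across, or 16 million texels for a square map, and that is before accounting for how the appearance changes with viewing and lighting direction, which is what the BTF and BSDF representations are meant to capture.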

When these intricate details are overlooked or inadequately rendered, the consequences are immediate and immersion-breaking. Digital characters and objects can appear plasticky, waxy, or unnaturally smooth, lacking the imperfections and subtle variations that define real-world materials. This failure to convincingly replicate how light interacts with a surface, how textures are perceived, and how materials behave makes the CGI “stand out against everything else,” as noted in our earlier discussion of artificial figures. It’s a fundamental visual misstep that reminds viewers they are observing a constructed image, rather than a living part of the cinematic world.

The ongoing battle for superior texture and shading drives continuous innovation in rendering algorithms and artistic workflows. While modern tools offer unprecedented levels of detail, the sheer computational demands and the artistic skill required to blend these elements seamlessly mean that the pursuit of perfect surface realism remains a constant challenge. When a digital character’s skin or a CGI environment’s rock face lacks convincing texture and realistic light interaction, it transforms from a seamless illusion into an obvious visual effect, becoming an embarrassing moment for the entire production.

9. **The Meticulous Process of Hair and Fur Simulation**

Among the most notoriously difficult and computationally intensive elements to simulate in CGI is realistic hair and fur. The inherent complexity lies in the sheer number of individual strands, their collective volume, their interaction with environmental forces, and their natural variations. For a computer-generated model, the process begins by creating “individual base hairs” that are then painstakingly “duplicated to demonstrate volume.” This initial step is critical, as these foundational strands dictate the overall flow and appearance of the entire hair or fur system, setting the stage for either a convincing illusion or a glaring digital artifact.

Achieving believability requires an extraordinary level of detail and variation. The source material emphasizes that “The initial hairs are often different lengths and colors, to each cover several different sections of a model,” allowing for organic, non-uniform growth. Pixar’s *Monsters, Inc.* (2001) famously illustrated this challenge with the character Sulley, who required “approximately 1,000 initial hairs generated that were later duplicated 2,800 times” to achieve his iconic furry look. The sheer scale is staggering, with “The quantity of duplications [ranging] from thousands to millions, depending on the level of detail sought after,” pushing rendering engines to their absolute limits to process such an enormous amount of geometric data.
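The base-hair-plus-duplication idea can be sketched in a few lines. This is only an illustration of the general approach, not Pixar's actual pipeline: the hair representation (a root position plus a length), the jitter ranges, and the function name `duplicate_hairs` are all assumptions. The arithmetic at the end reads "duplicated 2,800 times" as 2,800 copies per base hair.

```python
import random


def duplicate_hairs(base_hairs, copies_per_hair, jitter=0.05, seed=0):
    """Return jittered copies of each base hair, given as (x, y, length) tuples."""
    rng = random.Random(seed)  # seeded so the result is repeatable
    copies = []
    for x, y, length in base_hairs:
        for _ in range(copies_per_hair):
            copies.append((
                x + rng.uniform(-jitter, jitter),   # scatter roots around the base hair
                y + rng.uniform(-jitter, jitter),
                length * rng.uniform(0.9, 1.1),     # vary length so copies are not identical
            ))
    return copies


BASE_COUNT = 1_000   # initial hairs quoted for Sulley
COPIES = 2_800       # duplications quoted for Sulley
total_hairs = BASE_COUNT * COPIES   # 1,000 x 2,800 = 2,800,000 strands
```

Read that way, the quoted figures imply roughly 2.8 million strands, each of which still has to be shaded and, ideally, simulated. Small random variation per copy is one simple way to avoid the uniform, "stamped" look that makes fur read as artificial.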

However, simply generating millions of hairs isn’t enough; they must also behave physically like real hair or fur. This involves complex simulations accounting for gravity, wind, collisions with the character’s body or other objects, and even the effects of moisture, as exemplified by “Computer-generated wet fur created in Autodesk Maya.” When these physical properties are not accurately replicated, digital hair can appear stiff, clumped unnaturally, float without weight, or clip through the character model. Such visual discrepancies instantly shatter the illusion, transforming what should be a flowing, dynamic element into a rigid, artificial construct that detracts from the character’s overall believability.

Consequently, hair and fur simulation often becomes a battleground where resources are stretched thin, and artistic compromises can lead to less-than-convincing results. While advancements continue to be made, the meticulous nature of this process and the immense computational power it demands mean that imperfect hair and fur remain a common hallmark of “bad CGI.” These visual shortcomings can pull audiences out of the story, making them acutely aware of the digital artifice and leading to those all-too-familiar moments of cinematic embarrassment.

10. **The Computational Demands of Real-Time Interactive Visualization in Cinematic Contexts**

While much of CGI in film is pre-rendered, meticulously crafted over hours or even days per frame, an equally challenging frontier lies in “interactive visualization.” This involves the real-time rendering of dynamically changing data, allowing users (whether they are filmmakers on set using virtual cinematography or audiences in a virtual world) to view and interact with the data from multiple perspectives. This departure from static, pre-calculated images introduces a new layer of complexity: the imperative for immediate computational efficiency, which often clashes directly with the pursuit of ultimate visual realism, potentially creating its own brand of “CGI disaster.”

At its core, an interactive visualization process is structured around a “data pipeline.” Raw data is first “managed and filtered” into “visualization data,” which is then “mapped to a ‘visualization representation’” (a “renderable representation”) before finally being “rendered as a displayable image.” The critical distinction here is speed: as a user interacts with the system, perhaps by manipulating controls to change their position within a virtual environment, this entire pipeline must execute in fractions of a second. This continuous, immediate feedback loop means that “real-time computational efficiency [is] a key consideration” above almost all else.
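The three pipeline stages named above can be sketched as three small functions chained together and timed. Everything concrete here is an assumption for illustration: the point format (a dict with `x` in the range 0 to 1 and a `distance`), the one-row text "image", and all function names are hypothetical stand-ins for what a real system would do with geometry and pixels.

```python
import time


def manage_and_filter(raw_points, max_distance):
    """Stage 1: reduce raw data to visualization data (drop points too far away)."""
    return [p for p in raw_points if p["distance"] <= max_distance]


def map_to_representation(points, width):
    """Stage 2: map visualization data to a renderable form: (column, glyph) pairs."""
    rep = []
    for p in points:
        column = min(width - 1, int(p["x"] * width))
        glyph = "#" if p["distance"] < 5 else "."   # nearer points drawn more boldly
        rep.append((column, glyph))
    return rep


def render(representation, width):
    """Stage 3: render the representation as a displayable one-row text image."""
    row = [" "] * width
    for column, glyph in representation:
        row[column] = glyph
    return "".join(row)


def run_pipeline(raw_points, width=10, max_distance=10):
    """Run all three stages and report how long the whole pass took, in milliseconds."""
    start = time.perf_counter()
    visible = manage_and_filter(raw_points, max_distance)
    image = render(map_to_representation(visible, width), width)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return image, elapsed_ms
```

In an interactive system a function like `run_pipeline` would be called again every time the user moves, which is why the elapsed time of the whole chain, not of any single stage, is the number that matters.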

The immense computational demands of rendering complex graphics in real-time inevitably lead to trade-offs in visual fidelity. Unlike offline rendering, where supercomputers can spend vast amounts of time perfecting every pixel, real-time systems must prioritize frame rate over raw detail. This can result in simplified geometries, lower resolution textures, less sophisticated lighting and shadows, or reduced particle effects. While these compromises are acceptable in applications like flight simulators, where the primary goal is functional realism and immediate feedback, they can become glaringly apparent and detrimental when interactive elements are intended for high-fidelity cinematic integration.
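The frame-rate-versus-detail trade-off comes down to simple arithmetic: a target frame rate fixes a time budget per frame, and the renderer has to pick whatever detail fits inside it. The sketch below shows that idea only. The cost table is invented for illustration, the function names are hypothetical, and it assumes that a higher cost means higher detail.

```python
def frame_budget_ms(target_fps):
    """Time available to produce one frame at the given frame rate."""
    return 1000.0 / target_fps


def pick_detail_level(cost_ms_by_level, target_fps):
    """Pick the costliest (assumed most detailed) level that fits the frame budget.

    Falls back to the cheapest level when nothing fits.
    """
    budget = frame_budget_ms(target_fps)
    affordable = [lvl for lvl, cost in cost_ms_by_level.items() if cost <= budget]
    if affordable:
        return max(affordable, key=lambda lvl: cost_ms_by_level[lvl])
    return min(cost_ms_by_level, key=lambda lvl: cost_ms_by_level[lvl])


# Hypothetical per-frame render costs in milliseconds, for illustration only.
EXAMPLE_COSTS = {"low": 4.0, "medium": 12.0, "high": 40.0}
```

With this made-up table, a 60 fps target leaves about 16.7 ms per frame, so only the medium level fits, while a 24 fps target leaves about 41.7 ms and the high level becomes affordable. An offline render that spends hours on a frame is, in effect, working with a budget millions of times larger.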

In cinematic contexts, such as using virtual cameras on a motion-capture stage or interactive pre-visualization for complex sequences, any visible drop in quality due to real-time constraints can be jarring. If the on-set monitor displaying a digital environment or character looks visibly inferior to the final, polished CGI, it disrupts the creative process and can misguide artistic decisions. When these real-time limitations manifest in the final product, perhaps through a sequence initially designed for interactive previz but then poorly upscaled, the lack of refinement becomes a glaring “CGI disaster,” reminding everyone of the significant computational demands and the delicate balance between speed and ultimate visual perfection.

The journey through the world of CGI, from its foundational challenges to its most cutting-edge yet problematic applications, reveals a fascinating paradox. It is a technology of boundless potential, capable of crafting unimaginable worlds and characters, yet it is also a minefield of intricate technical hurdles where even the slightest misstep can shatter the illusion. From the eerie, unsettling nature of the uncanny valley to the painstaking simulation of a single strand of hair, each “CGI disaster” serves as a powerful reminder of the delicate balance between technological ambition and the pursuit of seamless, believable artistry.

Understanding these inherent difficulties not only deepens our appreciation for the visual effects triumphs that truly transport us but also sharpens our critical faculties when the digital veil falters. The pursuit of perfect digital illusion is an ongoing saga, one that continues to push the boundaries of science and art, ensuring that while CGI will undoubtedly continue to amaze, it will also, at times, continue to leave us with those memorable, albeit embarrassing, cinematic moments. After all, in the grand tapestry of filmmaking, even the flaws contribute to the story, reminding us of the human touch behind every digital dream, and nightmare.