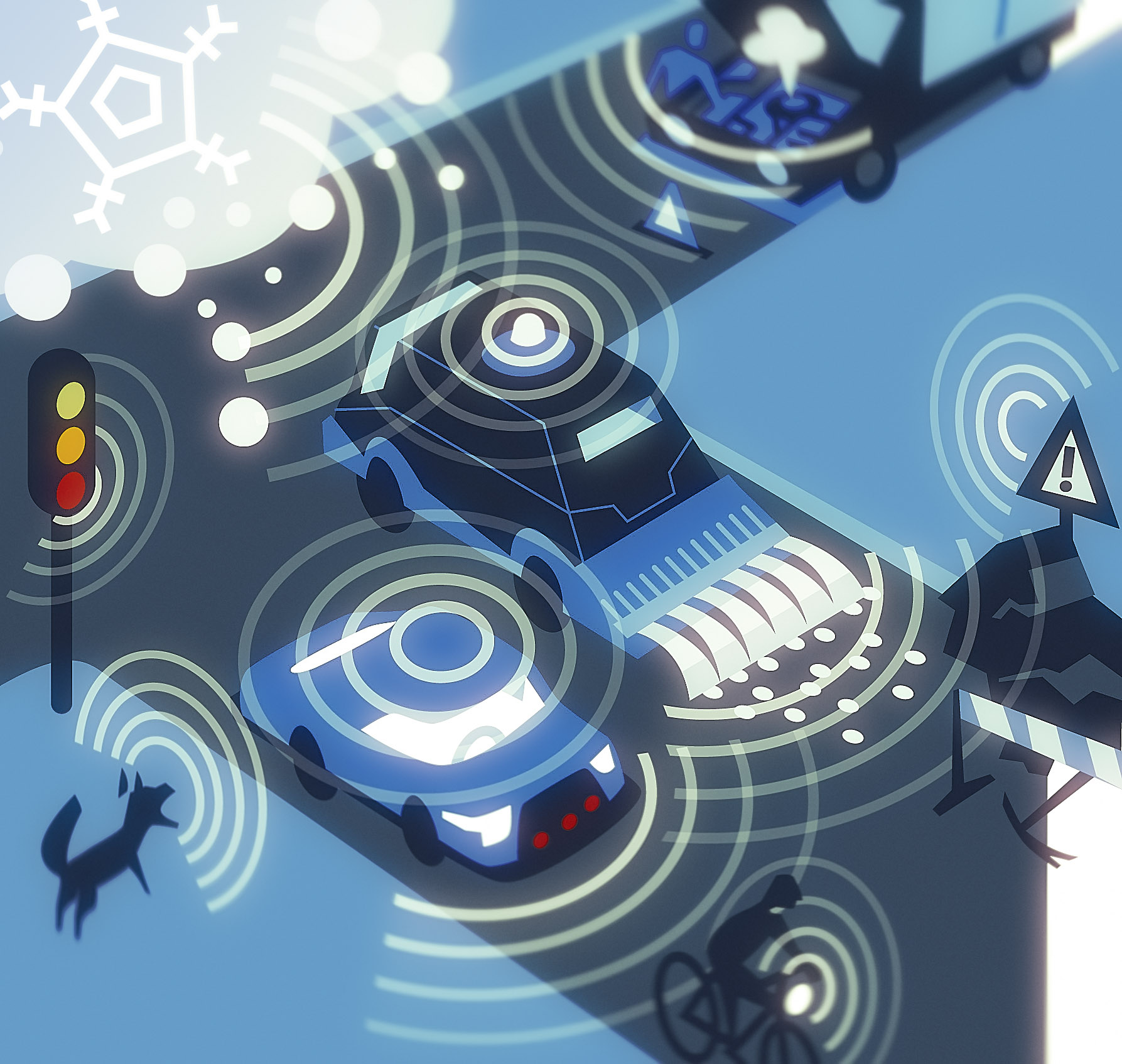

For more than a century, the act of driving has been intrinsically linked to human perception, with our eyes, ears, and reflexes guiding us through the complexities of the road. Yet as Level 4 autonomous vehicles move into scaled deployment in 2025, with Level 5 still an aspiration, these biological sensors are being superseded by an intricate tapestry of advanced technologies. These systems, from laser-based lidar scanners to high-definition cameras and radar units that pierce through adverse weather, are engineered to give machines a comprehensive and redundant perception of their surroundings. This transformation isn’t merely an upgrade; it’s a fundamental reimagining of how vehicles understand and interact with the world, moving from human-centric operation to machine intelligence.

The question naturally arises: if human drivers manage with just a pair of eyes and ears, why do driverless cars necessitate a diverse arsenal of up to a dozen different sensor types, strategically mounted around the vehicle? The answer lies squarely in the pursuit of absolute reliability and uncompromised safety. Each sensor technology, while possessing unique strengths that make it invaluable, also comes with inherent weaknesses. Cameras, for example, excel at discerning semantic details like road signs but can be compromised by blinding glare. Radar, conversely, operates with impressive resilience through rain and fog but often lacks the fine-grained resolution needed for detailed environmental mapping. Lidar offers exceptionally precise 3D data, yet its performance can be degraded by heavy snow and its cost has traditionally been high. These trade-offs call for a multi-layered approach, known as multimodal perception, which leverages the complementary strengths of various sensors so that no single point of failure can render the vehicle blind or unsafe.

Engineers therefore design these systems with redundancy at their core, ensuring that if one sensor’s efficacy is compromised, whether by challenging weather, shifting lighting conditions, or temporary occlusion, another can step in to provide critical information. This isn’t just an engineering preference; it’s a regulatory expectation. Safety regulators expect driverless cars to degrade gracefully, maintaining a robust understanding of their environment even if a channel of perception is temporarily lost. This fusion of diverse sensor inputs is the foundation upon which the trustworthiness and operational safety of these increasingly sophisticated robotaxis and shuttle pods are built, allowing them to navigate complex urban environments with minimal, if any, human intervention. As the industry advances, understanding these individual technologies is key to appreciating the larger intelligent mobility ecosystem they create.

1. Lidar: The Backbone of 3D Perception

Among the formidable array of sensors employed in autonomous vehicles, Lidar (Light Detection and Ranging) frequently stands out as the very backbone of their perception capabilities. Its operational principle is elegantly simple yet incredibly powerful: Lidar systems emit laser pulses into the surrounding environment and then measure the time it takes for these pulses to reflect off objects and return. Because the pulses travel at the speed of light, each return time translates directly into a distance. By processing these return times, a Lidar unit constructs a highly accurate and dense 3D point cloud, essentially creating a real-time, high-definition map of the vehicle’s immediate surroundings. This capability provides a geometric understanding of the world that few other sensor types can match, forming the critical foundation for navigation and object detection.
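The time-of-flight principle described above comes down to one line of arithmetic. The following is a minimal sketch, not any vendor’s implementation; the function name and the sample timing value are illustrative assumptions.

```python
# Speed of light in vacuum, metres per second (exact by SI definition).
SPEED_OF_LIGHT_M_S = 299_792_458.0


def range_from_round_trip(round_trip_s: float) -> float:
    """Return the one-way distance in metres for a measured round-trip time.

    Hypothetical helper for illustration only.
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels out to the object and back, so halve the path length.
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


if __name__ == "__main__":
    # Illustrative value: a return arriving one microsecond after emission.
    print(f"{range_from_round_trip(1e-6):.1f} m")
```

A real unit repeats this measurement for every pulse and beam direction, which is how the dense point cloud is accumulated.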

A significant advantage of Lidar over camera-based systems is its independence from ambient light. Unlike cameras, which rely on visible light to form images and can struggle in dim or overly bright conditions, Lidar performs consistently whether it’s the middle of a sunny day or the darkest part of the night. This unwavering performance across all lighting conditions is crucial for autonomous vehicles that must operate reliably around the clock. Companies like Waymo, for instance, utilize multiple lidars in their latest sixth-generation autonomous vehicle platform, strategically positioning them to cover long-, mid-, and short-range distances, thereby enabling the system to detect vehicles hundreds of meters away and simultaneously observe pedestrians close to the curb. Similarly, Zoox integrates lidars into the corners of its vehicles, ensuring comprehensive 360° visibility, a non-negotiable requirement for safe operation in bustling urban landscapes.

The Lidar industry is also undergoing rapid innovation, with current trends in 2025 pointing towards solid-state and FMCW (Frequency-Modulated Continuous Wave) lidars. These next-generation technologies promise not only lower manufacturing costs but also enhanced durability, crucial for mass deployment in robotaxi fleets. Furthermore, FMCW lidars offer the significant advantage of being able to directly measure an object’s velocity, adding another layer of critical data for predictive algorithms. What once cost tens of thousands of dollars is now being produced at scale for a mere fraction of that, making Lidar an increasingly practical and ubiquitous component in the drive towards widespread autonomous mobility. This evolution signifies Lidar’s transition from a high-cost research tool to a viable, scalable solution for real-world applications.

2. Radar: The All-Weather Workhorse, and 4D Imaging Radar

While Lidar excels in generating precise 3D geometric maps of the environment, Radar (Radio Detection and Ranging) carves out its essential niche by providing unmatched robustness in adverse weather conditions. The fundamental principle of radar involves emitting radio waves and then measuring the reflected signals to calculate an object’s speed and distance. Crucially, these radio waves possess a unique ability to penetrate rain, fog, and snow with minimal attenuation, granting autonomous vehicles a consistently reliable method to detect objects even when cameras or lidars might be struggling against environmental obscurities. This makes radar an indispensable component for maintaining situational awareness in challenging driving scenarios.
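Speed measurement by radar is commonly based on the Doppler shift of the reflected wave. The sketch below assumes a simple continuous-wave model and an illustrative 77 GHz carrier (automotive radar typically operates in the 76 to 81 GHz range); the function name is hypothetical.

```python
# Speed of light in vacuum, metres per second.
SPEED_OF_LIGHT_M_S = 299_792_458.0


def radial_speed_from_doppler(doppler_shift_hz: float, carrier_hz: float) -> float:
    """Return the radial speed in m/s implied by a measured Doppler shift.

    Positive values mean the target is approaching (assumed sign convention).
    """
    if carrier_hz <= 0:
        raise ValueError("carrier frequency must be positive")
    # Two-way Doppler: f_d = 2 * v * f0 / c, so v = f_d * c / (2 * f0).
    return doppler_shift_hz * SPEED_OF_LIGHT_M_S / (2.0 * carrier_hz)


if __name__ == "__main__":
    carrier = 77e9  # illustrative carrier frequency in Hz
    shift = 2.0 * 30.0 * carrier / SPEED_OF_LIGHT_M_S  # shift for a 30 m/s closing speed
    print(f"{radial_speed_from_doppler(shift, carrier):.2f} m/s")
```

Only the component of motion along the line of sight shows up in the shift, which is one reason radar data is combined with other sensors for full trajectory estimates.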

Traditional automotive radar has long been a staple in advanced driver assistance systems (ADAS), primarily powering features like adaptive cruise control and basic collision avoidance. However, for the intricate demands of full autonomy, a more sophisticated iteration has become essential: next-generation 4D imaging radar. These advanced radar units transcend the capabilities of their predecessors by not only measuring range and velocity but also adding vital elevation and high-resolution angular data. The result is a richer, more detailed environmental picture, producing something akin to a low-resolution 3D image, significantly improving object classification and tracking capabilities.
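To see why adding elevation and angular data yields something like a sparse 3D image, consider how a single detection maps to a point in space. This is a sketch under assumed conventions (x forward, y left, z up, angles in radians); the `RadarDetection` type and its field names are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class RadarDetection:
    """One 4D radar return: range, two angles, and radial speed (illustrative)."""
    range_m: float
    azimuth_rad: float       # 0 = straight ahead, positive = to the left
    elevation_rad: float     # 0 = horizontal, positive = upward
    radial_speed_m_s: float  # measured along the line of sight


def to_cartesian(det: RadarDetection) -> Tuple[float, float, float]:
    """Convert a detection from spherical to Cartesian sensor coordinates."""
    horizontal = det.range_m * math.cos(det.elevation_rad)
    x = horizontal * math.cos(det.azimuth_rad)     # forward
    y = horizontal * math.sin(det.azimuth_rad)     # left
    z = det.range_m * math.sin(det.elevation_rad)  # up
    return (x, y, z)


if __name__ == "__main__":
    detection = RadarDetection(40.0, math.radians(10.0), math.radians(2.0), -5.0)
    print(to_cartesian(detection))
```

Without the elevation angle, `z` cannot be recovered, so an overhead sign and a stopped car at the same range and azimuth would be hard to tell apart.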

Within a driverless car’s perception stack, 4D imaging radar plays a crucial role in tracking moving objects—be they other vehicles, cyclists, or pedestrians—and in accurately predicting their velocity and trajectories. This predictive capability is paramount for safe navigation, allowing the autonomous system to anticipate movements and plan its actions accordingly. When data from 4D imaging radar is seamlessly fused with inputs from lidar and camera sensors, it creates a robust and redundant perception system. This fusion ensures that the vehicle maintains continuous awareness of fast-approaching objects, even in the midst of a severe storm, mitigating the risks posed by sudden, unexpected events on the road. The integration of 4D radar is a significant step towards truly all-weather autonomous operation.

3. Cameras: The Semantic Eyes of the Vehicle

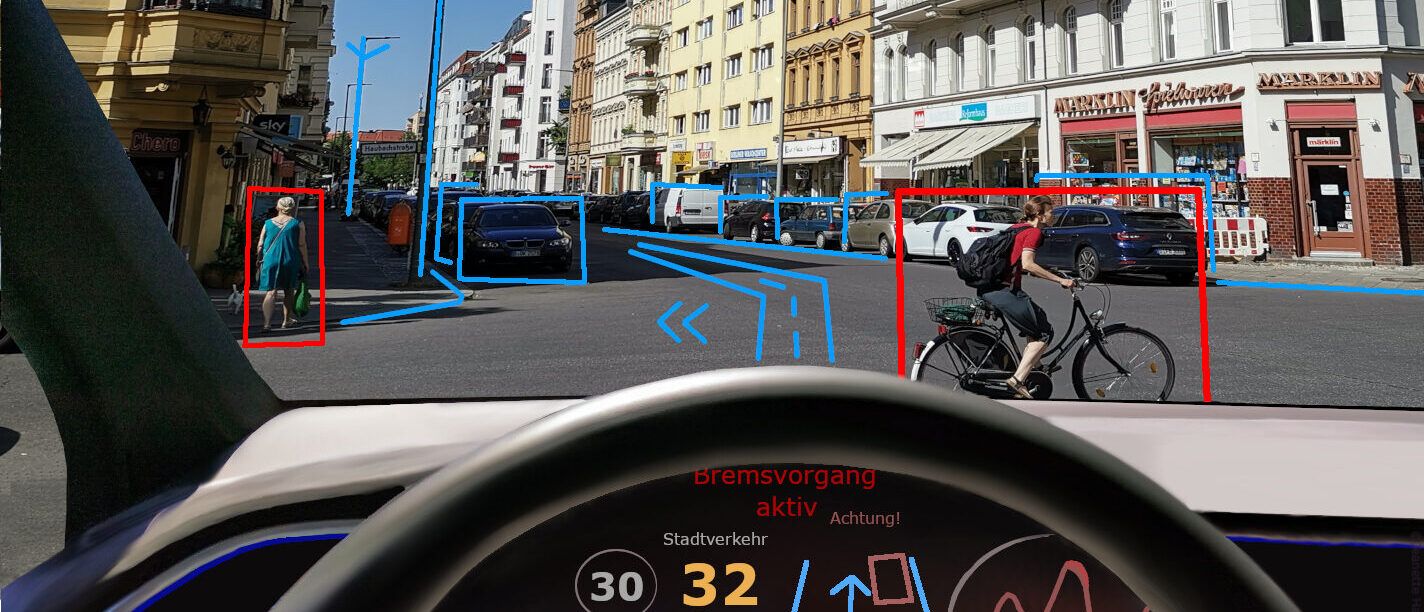

If Lidar and Radar provide the crucial structural and motion data of the environment, cameras contribute an equally vital dimension: semantics. Cameras are the “eyes” that allow a driverless car to recognize and interpret the meaning of road scenes. They are adept at identifying traffic lights, deciphering stop signs, following lane markings, spotting construction cones, and even picking up on subtle, dynamic cues such as a pedestrian’s body language. This ability to understand the symbolic rules and nuanced visual information of the road is indispensable for safe and context-aware navigation, transforming raw geometric data into actionable intelligence.

Modern driverless cars are equipped with an array of highly sophisticated camera technologies to accomplish this complex task. This includes high-dynamic-range (HDR) cameras, specifically designed to handle extreme lighting conditions—from harsh sunlight to deep shadows—without losing detail. Beyond this, a variety of wide-angle and telephoto lenses are strategically deployed to capture comprehensive details across varying distances, ensuring both broad situational awareness and the ability to zoom in on specific elements. Waymo’s robotaxis, for example, are known to feature over a dozen cameras meticulously placed around the vehicle to achieve complete 360-degree coverage, illustrating the sheer volume of visual information processed.

Further enhancing their capabilities, some fleets integrate specialized cameras to overcome specific visual challenges. Polarized cameras are utilized to reduce glare from wet roads, improving visibility in rainy conditions, while near-infrared (NIR) cameras extend the vehicle’s sight in low-light environments, beyond what visible light cameras can achieve. Without the rich semantic data provided by these advanced camera systems, a driverless car would struggle immensely to interpret the dynamic and often nuanced symbolic rules of the road, severely limiting its ability to operate safely and predictably in complex human-centric environments. Their role is to give the vehicle a deep understanding of context, not just geometry.

4. Thermal and Infrared Cameras: Seeing the Unseen

A progressively more common addition to the sophisticated sensor suite of autonomous vehicles in 2025 is thermal infrared imaging. Unlike traditional visible light cameras that capture images based on reflected light, thermal sensors operate by detecting heat signatures emitted by objects. This fundamental difference grants them a unique and powerful capability: they can effectively “see” in conditions where visible light is scarce or obscured, making them exceptionally valuable for spotting pedestrians, animals, or cyclists in pitch darkness, through smoke, or even through the glare of bright headlights.

The efficacy of thermal cameras in enhancing nighttime detection has been rigorously demonstrated through various studies. Research has consistently shown that combining thermal images with data from conventional RGB (color) cameras dramatically improves the accuracy of object detection during low-light conditions. This fusion of thermal and visual information provides a more robust and reliable perception layer, significantly reducing the likelihood of missed detections that could lead to accidents. The ability to perceive heat regardless of illumination makes thermal cameras a vital redundancy, especially for vulnerable road users who might otherwise be invisible.

Given these compelling advantages, automakers are increasingly integrating thermal cameras as a standard feature in urban robotaxis. This adoption is particularly pronounced in cities characterized by high nighttime pedestrian activity, where the risk of accidents involving humans is elevated after dark. By providing an additional, independent channel of perception that is impervious to common visual challenges, thermal and infrared cameras significantly bolster the overall safety profile of autonomous vehicles, ensuring they can navigate even the most challenging low-light scenarios with enhanced awareness and precision. They truly enable the vehicle to see the unseen, adding a critical layer of safety.

5. Ultrasonic Sensors: Close-Range Guardians

While Lidar, Radar, and cameras are diligently managing long- and mid-range perception for autonomous vehicles, a different set of sensors steps in to take charge of the immediate, near-field environment: ultrasonic sensors. These compact and cost-effective devices operate on a principle similar to sonar, emitting high-frequency sound waves and then calculating the time it takes for these waves to return after reflecting off an object. This precise measurement of return time allows them to accurately detect objects at very short distances, making them indispensable for maneuvers that require extreme proximity awareness.
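The same echo-timing idea applies here, only with sound instead of light. The sketch below uses a common linear approximation for the speed of sound in dry air; the function names and default temperature are assumptions for illustration.

```python
def speed_of_sound_m_s(air_temp_c: float) -> float:
    """Approximate speed of sound in dry air (linear approximation)."""
    return 331.3 + 0.606 * air_temp_c


def ultrasonic_range_m(echo_delay_s: float, air_temp_c: float = 20.0) -> float:
    """Return the distance to an obstacle from the echo delay of a ping."""
    if echo_delay_s < 0:
        raise ValueError("echo delay cannot be negative")
    # The sound wave travels to the obstacle and back, so halve the path.
    return speed_of_sound_m_s(air_temp_c) * echo_delay_s / 2.0


if __name__ == "__main__":
    # Illustrative value: an echo heard 10 ms after the ping at 20 degrees C.
    print(f"{ultrasonic_range_m(0.010):.3f} m")
```

Because sound is so much slower than light, the delays involved are in milliseconds rather than nanoseconds, which helps keep the electronics simple and inexpensive.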

Ultrasonic sensors are the unsung heroes of tight maneuvering, primarily used for tasks like parking assistance and detecting small obstacles. They are crucial for navigating crowded parking lots, fitting into narrow spaces, or safely approaching curbs. For example, the familiar rear parking sensors that emit a series of beeps as you get closer to an object in a consumer car are typically ultrasonic. In the context of fully driverless cars, their precision in detecting objects at close range is paramount, preventing low-speed collisions and helping the vehicle dock or park with centimeter-level accuracy.

Despite some consumer car manufacturers exploring vision-only alternatives for close-range detection, ultrasonic sensors maintain their significant value in fully driverless cars. Their direct measurement of distance, often unaffected by lighting conditions or certain surface characteristics that might challenge cameras, makes them a reliable guardian of the vehicle’s immediate perimeter. Crucially, for robotaxis where passenger safety around vehicle doors and in tight operational spaces is paramount, the consistent and precise feedback from ultrasonic sensors remains an essential component of the redundant safety architecture, providing a dedicated layer of protection for intricate, close-quarters movements.

6. GNSS, RTK, IMU, and Wheel Encoders: Knowing Where You Are Precisely

Perception of the environment, no matter how sophisticated, is rendered largely useless without an equally precise understanding of the vehicle’s own location—its “where.” To achieve this crucial localization, sensors in driverless cars rely on a sophisticated fusion of technologies, beginning with Global Navigation Satellite Systems (GNSS), such as GPS. However, standard GPS alone, while foundational, is often insufficient for the exacting demands of urban autonomous driving due to signal reflections caused by tall buildings, which can introduce significant errors in positioning.

To overcome the limitations of standard GPS, autonomous fleets employ RTK (Real-Time Kinematic) corrections. RTK technology significantly enhances positioning accuracy, narrowing it down to within a few centimeters, which is vital for lane-level precision. This high-accuracy satellite data is then further combined with Inertial Measurement Units (IMUs), which are sophisticated sensors comprising gyroscopes and accelerometers. IMUs continuously track the vehicle’s angular rotation and linear acceleration, providing critical data for motion analysis and understanding how the car is moving through space, irrespective of external signals.

Completing this localization stack are wheel encoders, which accurately measure the distance traveled by the vehicle. By continuously monitoring the rotation of each wheel, these encoders provide precise odometry data. The genius lies in fusing these diverse inputs: GNSS for global positioning, RTK for centimeter-level accuracy, IMUs for dynamic motion tracking, and wheel encoders for distance traveled. This multimodal fusion allows an autonomous car to accurately dead-reckon its path and maintain a precise understanding of its location even when satellite signals are temporarily lost, such as when traversing tunnels, parking structures, or navigating dense city centers with limited sky visibility. This integrated approach ensures uninterrupted, high-precision localization, a cornerstone of safe and reliable autonomous operation.
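Dead reckoning, as mentioned above, can be sketched in a few lines: the wheel encoders supply distance traveled and the IMU gyroscope supplies the change in heading. This is a simplified planar model with hypothetical names; production systems typically use a probabilistic filter and correct the accumulated drift whenever GNSS returns.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose:
    """Planar vehicle pose (illustrative): position in metres, heading in radians."""
    x_m: float
    y_m: float
    heading_rad: float


def dead_reckon_step(pose: Pose, wheel_distance_m: float,
                     yaw_rate_rad_s: float, dt_s: float) -> Pose:
    """Advance the pose by one time step using odometry and gyro data only."""
    # Heading change over the interval, from the IMU gyroscope.
    delta_heading = yaw_rate_rad_s * dt_s
    # Move along the mid-interval heading to approximate the driven arc.
    mid_heading = pose.heading_rad + delta_heading / 2.0
    return Pose(
        x_m=pose.x_m + wheel_distance_m * math.cos(mid_heading),
        y_m=pose.y_m + wheel_distance_m * math.sin(mid_heading),
        heading_rad=pose.heading_rad + delta_heading,
    )


if __name__ == "__main__":
    pose = Pose(0.0, 0.0, 0.0)
    for _ in range(10):
        # Illustrative inputs: 1 m per step while turning gently left.
        pose = dead_reckon_step(pose, wheel_distance_m=1.0,
                                yaw_rate_rad_s=0.1, dt_s=0.1)
    print(pose)
```

Small errors in each step accumulate over time, which is why dead reckoning bridges gaps in satellite coverage rather than replacing it.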

7. HD Maps: The Invisible Sensor

Beyond the physical hardware dedicated to perceiving the immediate environment, a profoundly critical yet often-overlooked component in autonomous vehicles is the high-definition (HD) map. These are far more sophisticated than consumer-grade navigation maps, engineered for centimeter-level accuracy and loaded with an incredibly rich layer of detailed information. They meticulously document precise road geometry, intricate lane-level markings, the exact locations of traffic signals, and even subtle nuances such as curb heights. This foundational digital blueprint provides a static yet exquisitely precise understanding of the world, forming a critical context for dynamic sensor inputs.

The true strategic value of HD Maps lies in their role as a robust prior knowledge layer for the autonomous system. This means the car does not have to rediscover and interpret every single detail of the road from scratch. Instead, it seamlessly aligns its real-time, live sensor data against this pre-existing, highly accurate digital twin of the environment. This significantly streamlines the computational burden on the vehicle’s onboard processors and enhances the reliability and speed of its perception and planning systems. To ensure currency, companies like Mobileye employ innovative methods, leveraging data crowdsourced from millions of consumer vehicles, perpetually refreshing maps to reflect recent changes.

8. External Microphones: Listening to the Road

Driverless cars are designed not only to see but also to listen, a crucial auditory capability facilitated by external microphone arrays, sometimes called “EARS.” These sophisticated acoustic sensors are meticulously designed to detect specific sounds from the environment, particularly those indicative of urgent situations. Their primary and most vital function is the detection of emergency vehicle sirens and horns, allowing the autonomous vehicle to register their presence and direction long before they might become visually apparent.

For instance, companies like Waymo integrate microphones into their vehicles specifically to determine the precise direction of an approaching ambulance or fire truck. This foresight allows the autonomous system to react proactively, yielding right-of-way well in advance. By incorporating this auditory layer of perception, driverless vehicles are empowered to behave not just predictably, but as genuinely courteous and responsive road users within the often-unpredictable tapestry of urban environments, enhancing situational awareness and safe coexistence.
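One textbook way to estimate the direction of a sound is to compare when it arrives at two microphones. The sketch below assumes a distant source, a fixed speed of sound, and a single microphone pair; it is not a description of any company’s system, and all names are hypothetical.

```python
import math

# Approximate speed of sound in dry air near 20 degrees C, metres per second.
SPEED_OF_SOUND_M_S = 343.0


def bearing_from_delay(delay_s: float, mic_spacing_m: float) -> float:
    """Return the source bearing in degrees from the arrival-time difference.

    delay_s is the arrival time at the right microphone minus the arrival time
    at the left one, so a positive delay means the source is toward the left.
    The bearing is measured from the direction perpendicular to the mic axis.
    """
    if mic_spacing_m <= 0:
        raise ValueError("microphone spacing must be positive")
    ratio = SPEED_OF_SOUND_M_S * delay_s / mic_spacing_m
    # Measurement noise can push the ratio just outside [-1, 1]; clamp it.
    ratio = max(-1.0, min(1.0, ratio))
    return math.degrees(math.asin(ratio))


if __name__ == "__main__":
    # Illustrative values: microphones 0.5 m apart, 0.5 ms arrival difference.
    print(f"{bearing_from_delay(0.0005, 0.5):.1f} degrees")
```

A single pair cannot tell whether the source is in front of or behind the microphone axis, which is one reason arrays with several microphones are used in practice.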

9. V2X Communication: Talking to the World

Autonomous vehicles extend their “senses” beyond onboard perception to include direct communication with their environment, a capability known as Vehicle-to-Everything (V2X) communication. This groundbreaking technology allows cars to exchange vital information seamlessly with infrastructure, vulnerable road users, and other vehicles. V2X creates a shared digital awareness, transcending the line-of-sight limitations inherent in even the most advanced physical sensors. Imagine a driverless car approaching an intersection where an ambulance is coming from around a blind corner. V2X could provide an instant, invaluable warning, alerting it to the emergency long before onboard sensors detect it.

This pre-emptive intelligence is a game-changer for collision avoidance and proactive navigation, significantly enhancing safety margins. The foundational regulatory frameworks for V2X are also falling into place; in the United States, for example, the FCC adopted final rules in 2024 dedicating part of the 5.9 GHz band to Cellular V2X (C-V2X), marking a pivotal step toward its broader deployment in 2025. As more cities adopt connected infrastructure, V2X is likely to mature into a crucial complement to the vehicle’s onboard sensors, enabling safer and more cooperative driving experiences.

10. Interior Sensing Systems: Monitoring Driver and Occupant

While external sensors monitor the world outside, an equally critical frontier in automotive safety and intelligence lies within the cabin: interior monitoring systems. These encompass both Driver Monitoring Systems (DMS) and Occupant Monitoring Systems (OMS), representing a sophisticated evolution in understanding human-machine interaction. These systems enhance safety by assessing the state of the driver and the behavior of all occupants, moving towards a more adaptive and personalized in-cabin experience.

A pivotal leap in this domain, announced in late 2025, comes from STMicroelectronics in collaboration with Tobii, commencing mass production of an advanced interior sensing system. This innovative solution efficiently manages both DMS and OMS functionalities using a single-camera approach. At its core is STMicroelectronics’ VD1940 image sensor, a high-resolution 5.1-megapixel device with a hybrid pixel design. This allows the sensor to be exceptionally sensitive to both RGB (color) light for optimal daytime operation and infrared (IR) light for robust, continuous performance in low-light or nighttime conditions. Its wide-angle field of view covers the entire cabin, capturing high-quality images.

Tobii’s cutting-edge algorithms then process these dual video streams, extracting crucial data points for real-time assessment of driver attention, detecting signs of distraction or fatigue, and monitoring broader occupant behavior. This integrated single-camera solution contrasts sharply with previous approaches requiring multiple sensors. By unifying DMS and OMS, STMicroelectronics offers carmakers a more cost-efficient, streamlined, and easier-to-integrate solution without compromising on the critical performance or accuracy needed for safety-critical applications. The DMS component, for example, directly addresses human error, a significant cause of accidents, by tracking driver attention.

Complementing the DMS, the Occupant Monitoring System (OMS) extends this watchful intelligence to all passengers. OMS can detect occupant presence, position, and size for intelligent airbag deployment and restraint adjustments. Beyond fundamental safety, OMS enables personalized in-cabin experiences, from adjusting climate control based on presence to recognizing gestures or detecting child behavior for enhanced protection. These advancements promise unparalleled safety and comfort, though they also open dialogues around data privacy and the ethical implications of continuous surveillance within a private space.

11. Sensor Fusion: The Orchestration of Intelligence

The true genius behind modern autonomous vehicles isn’t just the sheer number or sophistication of individual sensors, but the intricate process of “sensor fusion.” This is the sophisticated art of combining data from various disparate sensors – such as lidar, radar, and cameras – to produce a single, more accurate, reliable, and holistic understanding of the vehicle’s environment. Sensor fusion acts as the digital “glue” that binds all these individual perception channels into a coherent, predictive brain, providing a level of trustworthiness and operational safety that no single sensor could achieve on its own.

The fundamental necessity for sensor fusion stems directly from the inherent trade-offs of each sensor technology. Cameras excel at semantic interpretation but are vulnerable to glare, while radar thrives in adverse weather but lacks high-resolution detail. Lidar offers precise 3D geometry but can be affected by heavy snow. Sensor fusion brilliantly leverages these complementary strengths, ensuring that if one sensor’s efficacy is compromised, another can seamlessly step in to provide critical, cross-verified information. This redundancy is not merely an engineering preference; it’s a regulatory expectation, ensuring vehicles degrade gracefully.
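A toy version of this idea is inverse-variance weighting: each sensor’s estimate is weighted by how much it is currently trusted, and a sensor that drops out is simply skipped. This is a minimal sketch with hypothetical names and made-up numbers; real perception stacks use far richer methods such as Kalman filters and learned fusion networks.

```python
from typing import Optional, Sequence, Tuple

# A measurement is (value, variance); a lower variance means more trust.
Measurement = Tuple[float, float]


def fuse(measurements: Sequence[Optional[Measurement]]) -> Measurement:
    """Fuse independent estimates of one quantity by inverse-variance weighting.

    Entries that are None represent sensors that are currently unavailable.
    """
    available = [m for m in measurements if m is not None]
    if not available:
        raise ValueError("no sensor is currently reporting")
    if any(variance <= 0 for _, variance in available):
        raise ValueError("variances must be positive")
    weights = [1.0 / variance for _, variance in available]
    total_weight = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, available)) / total_weight
    # The fused variance is never larger than the best single sensor's.
    return value, 1.0 / total_weight


if __name__ == "__main__":
    lidar = (50.2, 0.01)  # illustrative: precise range estimate in clear air
    radar = (50.9, 0.25)  # illustrative: coarser but weather-robust estimate
    print(fuse([lidar, radar]))  # both sensors healthy
    print(fuse([None, radar]))   # lidar blinded, fall back to radar alone
```

The second call shows graceful degradation in miniature: the estimate gets less certain when a sensor is lost, but the system keeps producing one.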

This multimodal fusion is evident across real-world autonomous fleets. Waymo relies on a rich mix of 13 cameras, 4 lidars, 6 radars, and external microphones. Zoox strategically places lidar and radar units at the corners of its bidirectional vehicles for comprehensive, overlapping coverage. A consistent pattern holds true across the industry in 2025: no major robotaxi fleet relies solely on a single type of sensor, underscoring how critical diversified perception is for robust operation. Deployment is only part of the challenge: manufacturing and calibrating these sensor suites, using facilities such as specialized calibration tunnels and artificial rain bays, is equally demanding and just as essential for safety and reliability.

The debate between “sensor-rich” versus “vision-only” approaches persists. Tesla champions vision-only, arguing neural networks can interpret the world with cameras alone. Waymo and Zoox firmly advocate for sensor redundancy, asserting it’s essential for unwavering safety and reliability in unpredictable urban environments. While sensor-rich suites add to cost, vision-only approaches have yet to consistently prove the same robust reliability under all conditions, especially in complex scenarios. This fundamental disagreement highlights the varying philosophies in achieving full autonomy.

Looking toward the horizon, the future of sensor technology and fusion is marked by several trends: widespread adoption of FMCW lidar and 4D imaging radar, wider acceptance of thermal cameras, continuously refreshed dynamic HD maps, and cooperative perception via V2X. Further out, the ambitious concept of quantum sensors looms, with the potential to reshape the automation industry, though it remains far from production vehicles. Challenges persist in refining AI algorithms, ensuring data privacy, and developing industry standards. However, the convergence of advanced sensor capabilities with powerful in-vehicle AI processors promises to unlock new levels of vehicle intelligence and autonomy.

The journey into the unseen tech that powers our future cars reveals a fundamental truth: fully driverless vehicles will not succeed because of one miraculous sensor, but because a multitude of sophisticated senses cooperates, cross-checks, and meticulously covers each other’s blind spots. It is the seamless interplay of high-resolution lidars, all-weather radars, semantic cameras, thermal imagers, and precise ultrasonic guardians, all bound together by localization systems and HD Maps, that forms this robust perception stack. Added layers of external microphones for auditory awareness and V2X communication for proactive dialogue glue this entire system into a coherent, predictive brain.

Indeed, the 2025 state of the art in autonomous technology is unequivocally sensor-rich and fusion-heavy, with increasing support from advanced 4D radar, thermal imaging, dynamic HD maps, and V2X communication. This represents a profound shift in AI history, bringing advanced computational intelligence into real-world, safety-critical applications and tangibly enhancing human safety and convenience. The long-term impact will transform the driving experience, turning vehicles from mere modes of transport into intelligent, adaptive co-pilots and personalized mobile environments. As the global race to equip vehicles with the most sophisticated “senses” intensifies, the future promises a safer, smarter, and profoundly more autonomous era of mobility.